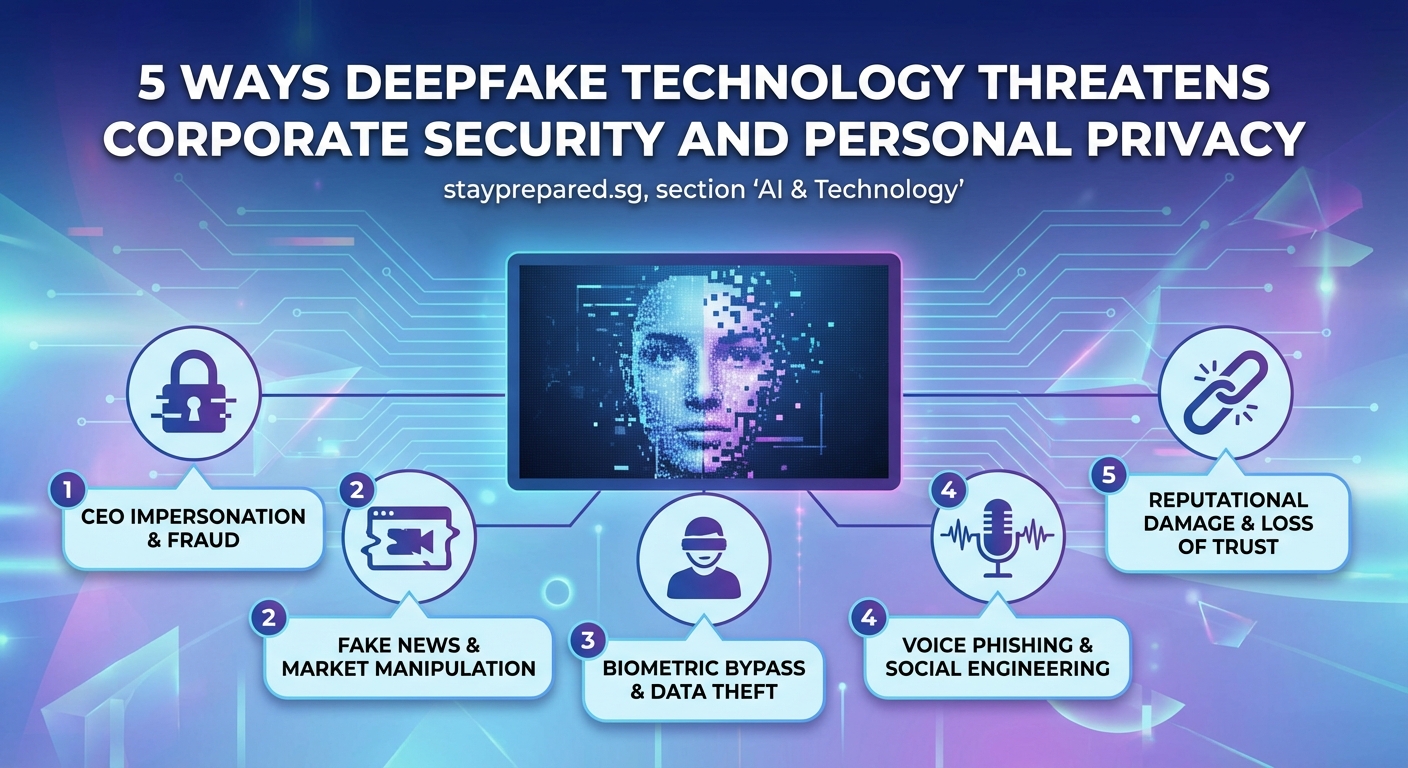

Deepfake technology has moved from novelty to serious business threat in less than five years. What started as face-swapping apps has evolved into sophisticated fraud tools capable of fooling executives, draining bank accounts, and destroying reputations. Corporate security teams now face attackers who can clone voices in seconds and create convincing video impersonations with minimal effort.

Deepfakes pose escalating risks to corporate security through CEO fraud, credential theft, and reputation attacks. Organizations need multi-layered defenses including employee training, voice authentication protocols, and AI detection tools. Security teams must treat deepfakes as a critical threat vector requiring immediate policy updates and technical controls to prevent financial loss and data breaches.

How Deepfakes Breach Corporate Defenses

Traditional security training teaches employees to watch for spelling errors and suspicious email addresses. Deepfakes bypass these safeguards entirely.

A finance manager receives a video call from the CEO requesting an urgent wire transfer. The face matches. The voice sounds right. The background shows the executive’s actual office. Every visual cue checks out. The manager approves the transfer. Twenty minutes later, the real CEO walks past her desk.

A similar scenario played out at a Hong Kong company in 2024, resulting in a loss of roughly $25 million. The attackers reportedly used publicly available video footage to create convincing deepfakes for a video conference call with multiple participants.

The technology barrier has collapsed. Tools that required specialized knowledge three years ago now run on consumer hardware. Some voice-cloning tools claim to work from as little as three seconds of audio. Face-swapping software can operate in real time during video calls.

Five Attack Vectors Targeting Organizations

Corporate security professionals need to understand how attackers deploy deepfakes against businesses.

Executive Impersonation and Financial Fraud

CEO fraud has existed for years through email spoofing. Deepfakes make these attacks far more convincing.

Attackers gather source material from earnings calls, conference presentations, and social media videos. They create audio or video deepfakes that mimic executives requesting transfers, approving contracts, or sharing sensitive information.

Finance teams trained to verify email requests often trust video confirmation. The psychological impact of seeing and hearing a familiar executive overrides standard verification protocols.

Credential Harvesting Through Fake IT Support

Help desk impersonation represents another growing threat vector. Attackers create deepfake audio of IT managers calling employees to “verify credentials” or “update security settings.”

The fake IT representative sounds exactly like the real manager. Employees who would never respond to a phishing email may willingly provide passwords over the phone to someone they believe is their IT manager.

Reputation Attacks and Market Manipulation

Public-facing executives become targets for deepfake campaigns designed to damage stock prices or brand reputation.

A fabricated video showing a CEO making racist comments or announcing false financial troubles can spread across social media in minutes. Even after debunking, the damage persists. Share prices drop. Customers lose confidence. Competitors gain advantage.

Meeting Infiltration and Espionage

Attackers compromise employee accounts and join virtual meetings using deepfake video and audio. They appear as legitimate participants, gathering intelligence about projects, strategies, and vulnerabilities.

In hybrid work environments where not everyone knows each other by sight, a convincing deepfake can participate in sensitive discussions for weeks before detection.

Vendor and Partner Impersonation

Supply chain attacks extend to deepfake impersonation of vendor representatives. An attacker posing as a long-term supplier requests updated payment details or asks for proprietary specifications.

The voice matches previous calls. The video shows the familiar contact. The request seems routine. The payment gets redirected to a fraudulent account.

Detection Challenges Security Teams Face

Identifying deepfakes requires new skills and tools that most security teams lack.

| Detection Method | Effectiveness | Implementation Challenge |
|---|---|---|
| Visual artifact analysis | Moderate | Requires specialized training and constant updates |
| Audio frequency analysis | High for synthetic voices | Limited effectiveness against cloned voices |
| Behavioral analysis | Variable | Needs baseline data for each executive |
| Multi-factor verification | Very high | Depends on employee compliance |
| AI detection tools | Improving | Arms race with creation tools |

Human perception is unreliable against high-quality deepfakes. Some studies report that people correctly identify deepfakes only about 50 to 60 percent of the time, barely better than random chance.

AI detection tools show promise but face constant evolution as creation techniques improve. What works today may fail tomorrow as attackers refine their methods.

Building Effective Defense Strategies

Organizations need layered defenses that assume deepfakes will bypass technical controls.

Verification Protocols for High-Risk Transactions

Every organization should implement mandatory verification for sensitive requests:

- Establish a secondary communication channel for all financial transfers above a threshold amount.

- Require in-person or pre-arranged phone verification for executive requests outside normal procedures.

- Create code words or authentication phrases known only to specific teams.

- Document and enforce waiting periods for urgent requests that bypass standard approval workflows.

These protocols work because deepfake attackers rely on urgency and authority to prevent verification. Slowing down the process breaks their attack model.
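The rules above can be expressed as a simple policy check. The following is a minimal sketch, not a production control: the threshold, the waiting period, and all names (`TransferRequest`, `outstanding_checks`, the channel labels) are hypothetical and would need to match your own approval workflow.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical policy values; set these from your own risk assessment.
TRANSFER_THRESHOLD = 10_000                  # above this, a second channel is mandatory
URGENT_WAITING_PERIOD = timedelta(hours=4)   # cooling-off for requests that skip the normal workflow


@dataclass
class TransferRequest:
    amount: float
    requested_at: datetime
    channel: str                              # where the request arrived, e.g. "video_call"
    bypasses_standard_workflow: bool
    # Channels on which someone independently confirmed the request.
    confirmed_channels: frozenset = frozenset()


def outstanding_checks(req: TransferRequest, now: datetime) -> list[str]:
    """Return the checks that still block this transfer (empty list = may proceed)."""
    checks = []

    # A confirmation only counts if it came over a different channel
    # than the one the (possibly deepfaked) request arrived on.
    independent = req.confirmed_channels - {req.channel}
    if req.amount > TRANSFER_THRESHOLD and not independent:
        checks.append("confirm on a secondary channel")

    # Urgent, out-of-process requests must sit for the waiting period.
    if req.bypasses_standard_workflow:
        release_at = req.requested_at + URGENT_WAITING_PERIOD
        if now < release_at:
            checks.append(f"wait until {release_at.isoformat()}")

    return checks
```

The key design choice is that a confirmation on the same channel as the original request is ignored: a second video call from the same "CEO" proves nothing if the first one was synthetic.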

Employee Training Beyond Phishing Awareness

Security awareness programs need updates to address deepfake threats:

- Show employees examples of high-quality deepfakes to calibrate their threat perception.

- Teach the difference between email spoofing and multimedia impersonation.

- Practice verification protocols through simulated deepfake attacks.

- Encourage healthy skepticism even when visual and audio cues seem legitimate.

- Reward employees who follow verification procedures, especially under pressure.

Training should emphasize that trusting your eyes and ears is no longer sufficient. Verification procedures exist to protect both the employee and the organization.

Technical Controls and Monitoring

Security teams should deploy multiple technical layers:

- Implement voice biometrics for authentication on sensitive systems. These systems analyze vocal characteristics that current deepfakes may not replicate well, but they should be one factor among several rather than sole proof of identity.

- Deploy AI-based detection tools that analyze video calls for artifacts, inconsistencies, and unnatural movements. Update these tools regularly as creation techniques evolve.

- Monitor for unusual communication patterns. A CEO who never makes video calls suddenly requesting one should trigger alerts.

- Restrict access to high-quality video and audio of executives. The less source material available, the harder deepfake creation becomes.
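The communication-pattern monitoring described above can be approximated with a per-sender baseline of which channels each person normally uses. This is a rough sketch under stated assumptions: the class name `ChannelBaseline`, the `min_history` and `rare_share` parameters, and their default values are all illustrative, and a real deployment would draw its history from call and mail logs.

```python
from collections import Counter, defaultdict


class ChannelBaseline:
    """Tracks how often each sender uses each channel and flags rare ones."""

    def __init__(self, min_history: int = 20, rare_share: float = 0.05):
        self._counts = defaultdict(Counter)   # sender -> Counter of channels
        self.min_history = min_history        # contacts needed before we judge
        self.rare_share = rare_share          # below this share, a channel is "unusual"

    def record(self, sender: str, channel: str) -> None:
        """Log one legitimate, verified contact from sender over channel."""
        self._counts[sender][channel] += 1

    def is_unusual(self, sender: str, channel: str) -> bool:
        """True if this sender has a baseline and rarely or never uses this channel."""
        history = self._counts.get(sender, Counter())
        total = sum(history.values())
        if total < self.min_history:
            return False  # not enough data to call anything unusual
        return history[channel] / total < self.rare_share


# Usage: an executive with 30 logged emails suddenly requests a video call.
baseline = ChannelBaseline(min_history=10)
for _ in range(30):
    baseline.record("ceo", "email")

if baseline.is_unusual("ceo", "video_call"):
    print("Alert: unusual channel for this sender, verify before acting")
```

An alert here should trigger the verification protocol, not block the call outright, since legitimate behavior changes too.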

Incident Response Planning

Organizations need specific procedures for suspected deepfake incidents:

- Establish a reporting channel for employees who suspect deepfake contact.

- Create a rapid response team that can verify executive communications within minutes.

- Develop communication templates for addressing deepfake attacks that become public.

- Plan investor and market communications in case deepfakes target public-facing executives.

- Coordinate with law enforcement on investigation and attribution.

“The best defense against deepfakes is creating a culture where verification is expected, not insulting. When employees feel empowered to question even the CEO’s video call, you’ve built real resilience.” – Corporate Security Researcher

Industry-Specific Vulnerabilities

Different sectors face unique deepfake risks based on their operations and public profiles.

Financial services companies handle high-value transactions that make them prime targets for deepfake fraud. Their verification protocols need the highest rigor.

Healthcare organizations face risks around patient privacy and medical decision-making. A deepfake of a doctor could authorize treatments or access sensitive records.

Technology companies possess valuable intellectual property that deepfake-enabled espionage could compromise. Their meeting security needs special attention.

Media and entertainment companies face reputation risks from deepfakes that could damage talent relationships and audience trust.

Regulatory and Legal Landscape

Laws addressing deepfakes vary widely by jurisdiction and lag behind technological capabilities.

Some regions criminalize malicious deepfake creation. Others focus on disclosure requirements. Few provide clear guidance for corporate victims seeking recourse.

Organizations operating internationally need to understand how different jurisdictions treat deepfake evidence, liability, and prosecution. Insurance policies may not cover deepfake-related losses without specific riders.

Corporate legal teams should review contracts, policies, and procedures to address deepfake scenarios explicitly. Ambiguity in existing frameworks creates liability gaps.

Investment Priorities for Security Budgets

Security leaders allocating resources should consider:

- Authentication infrastructure that doesn’t rely solely on visual or audio verification.

- Detection tools with regular updates to match evolving deepfake techniques.

- Training programs that include realistic deepfake simulations.

- Incident response capabilities specific to multimedia fraud.

- Legal and insurance review to address deepfake scenarios.

The cost of prevention remains far lower than the cost of a successful attack. The loss from a single deepfake fraud incident can exceed a mid-sized company's annual security budget.

Preparing for Escalating Threats

Deepfake technology will continue improving. Real-time generation quality will increase. Detection will become harder. Attack volume will grow.

Organizations that treat deepfakes as a future problem rather than a current threat will find themselves unprepared when incidents occur.

Security teams should assume that convincing deepfakes of their executives already exist or can be created within hours. Building defenses from that assumption creates appropriate urgency.

The gap between creation capability and detection capability favors attackers. This imbalance will persist for the foreseeable future.

Making Your Organization Resilient

The deepfake threats that corporate security teams face will only intensify as the technology becomes more accessible and convincing. The organizations that fare best will be those that move beyond technical controls alone.

Build a security culture where verification is routine, not exceptional. Train employees to trust procedures over their senses. Implement authentication methods that deepfakes cannot easily bypass. Plan for incidents before they happen.

The threat is real. The technology exists today. The question is not whether your organization will face deepfake attacks, but whether you’ll be ready when they come.

Start with one concrete step this week. Update your verification protocols for financial transactions. Schedule deepfake awareness training. Test your incident response plan against a multimedia fraud scenario. Small actions today prevent major losses tomorrow.